High Availability and Pacemaker 101!

In this blog post, we will talk a little bit about High Availability and a little bit more about Pacemaker. Here are some of the topics of this post:

- Introduction to High Availability (HA) and Clustering.

- Benefits of Highly Available applications.

- How HA is implemented on Red Hat Enterprise Linux (RHEL) 7.

- HA requirements on RHEL 7.

- Demo: Building a 3 node Apache cluster.

Introduction to High Availability (HA) and Clustering.

What is High Availability (HA)?

In IT, High Availability refers to a system or component that is continuously operational for a desirably long length of time.

3 core principles of HA

- Elimination of single points of failure.

- Reliable crossover.

- Detection of failures as they occur.

How is High Availability implemented on RHEL 7?

CLUSTERING!

What is Clustering?

A cluster is a set of computers working together on a single task. Which task is performed, and how that task is performed, differs from cluster to cluster.

There are four (4) different kinds of clusters:

High-availability clusters: Also known as HA clusters or failover clusters, their function is to keep running services as available as possible. They come in two main configurations:

- Active-Active (where a service runs on multiple nodes).

- Active-Passive (where a service only runs on one node at a time).

Load-balancing clusters: All nodes run the same software and perform the same task at the same time, and client requests are distributed across all the nodes.

Compute clusters: Also known as high-performance computing (HPC) clusters. In these clusters, tasks are divided into smaller chunks, which are then computed on different nodes.

Storage clusters: All nodes provide a single cluster file system that will be used by clients to read and write data simultaneously.

Benefits of Highly Available applications

In two words: "Application resiliency". A highly available application can stay in service through:

- Patching.

- Planned outages.

- Unplanned outages due to failures (server, software, network, storage).

How is HA implemented on RHEL 7?

Red Hat Enterprise Linux High Availability Add-On.

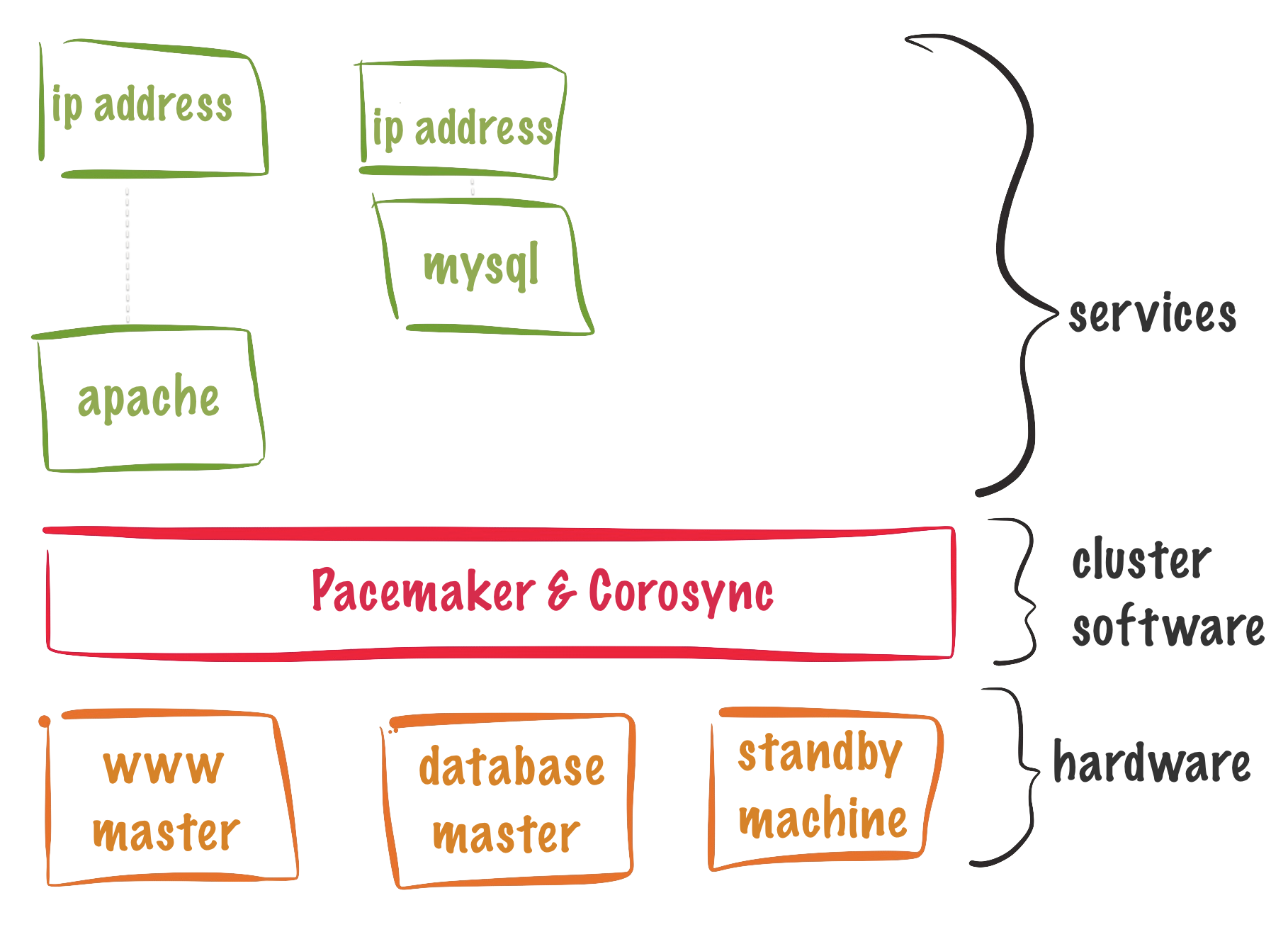

The High Availability Add-On consists of the following major components:

Cluster infrastructure: Provides fundamental functions for nodes to work together as a cluster: configuration file management, membership management, lock management, and fencing.

High-availability Service Management: Provides failover of services from one cluster node to another.

Cluster administration tools: Configuration and management tools for setting up, configuring, and managing the cluster.

To provide the above services, multiple software components are required on the cluster nodes.

Software

The cluster infrastructure software is provided by Pacemaker and performs the following functions:

- Cluster management

- Lock management

- Fencing

- Cluster configuration management

Cluster software:

pacemaker: It's responsible for all cluster-related activities, such as monitoring cluster membership, managing the services and resources, and fencing cluster members. The RPM contains three (3) important components:

- Cluster Information Base (CIB).

- Cluster Resource Management Daemon (CRMd).

- Shoot the Other Node in the Head (STONITH).

corosync: This is the framework used by Pacemaker for handling communication between the cluster nodes.

pcs: Provides a command-line interface to create, configure, and control every aspect of a Pacemaker/corosync cluster.

Requirements and Support.

Here are some requirements and limits for Pacemaker.

Number of Nodes:

- Up to 16 nodes per cluster.

- Minimum number of nodes: 3.

- A 2-node cluster can be configured but is not recommended.
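The preference for three nodes follows from majority-based quorum. Here is a minimal sketch of the arithmetic, assuming one vote per node and a simple majority rule; the function names are illustrative and not part of any cluster tool.

```shell
#!/bin/sh
# Votes needed for quorum with one vote per node: floor(n / 2) + 1.
quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Node failures the cluster can absorb while still holding quorum.
tolerated_failures() {
    echo $(( $1 - $(quorum "$1") ))
}

for n in 2 3 16; do
    echo "$n nodes: quorum=$(quorum "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

Under these assumptions a 2-node cluster cannot lose a single node and keep a majority, while a 3-node cluster can lose one.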

Cluster location:

Single site: A cluster setup where all cluster members are in the same physical location, connected by a local area network. (Supported).

Multisite: Two clusters, one active and one for disaster recovery. Failover for multisite clusters must be managed manually. (Supported).

Stretch (or Geo) clusters: Clusters stretched out over multiple physical locations. (Requires an architecture review to be supported).

Fencing:

Fencing is the process of cutting a node off from shared storage. This can be done by power cycling a node or disabling communication to the storage level.

WARNING: Fencing is required for all nodes in the cluster, either via power fencing, storage fencing, or a combination of both.

NOTE: If the cluster will use integrated fencing devices like ILO or DRAC, the systems acting as cluster nodes must power off immediately when a shutdown signal is received, instead of initiating a clean shutdown.

Virtualization

Virtual machines are supported both as nodes and as resources.

NOTE: VM as a resource means that the virtualization host is participating in a cluster and the virtual machine is a resource that can move between cluster nodes.

Networking

Required:

- Multicast and IGMP (Internet Group Management Protocol).

- Gratuitous ARP used for floating IP Address.

Ports:

- 5405/UDP - corosync

- 2224/TCP - pcsd

- 3121/TCP - pacemaker

- 21064/TCP - dlm
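If you would rather open these ports one by one than rely on a predefined firewalld service, the loop below is one way to generate the commands. It is only a sketch: it prints the `firewall-cmd` calls instead of running them, and the variable and function names are made up for this example.

```shell
#!/bin/sh
# Ports taken from the list above.
HA_PORTS="5405/udp 2224/tcp 3121/tcp 21064/tcp"

# Print (rather than run) one firewall-cmd call per port, then a reload.
print_firewall_commands() {
    for port in $HA_PORTS; do
        echo "firewall-cmd --permanent --add-port=$port"
    done
    echo "firewall-cmd --reload"
}

print_firewall_commands
```

Review the printed commands, then run them as root on each node.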

RHN Channels

Required:

- rhel-7-server-rpms

- rhel-ha-for-rhel-7-server-rpms

Building a 3 node Apache cluster.

Now, we will build a basic pacemaker cluster serving Apache. To do this we will need 3 systems running RHEL 7.X.

Preparing the systems

All these actions must be performed on all the cluster nodes.

Configure Firewall

Let's start by configuring FirewallD to allow traffic.

firewall-cmd --permanent --add-service=high-availability

firewall-cmd --reload

Install required software

yum install pcs fence-agents-all

The pcs package requires corosync and pacemaker, so all the software you need will be installed by this command.

Enable pcsd

pcsd provides cluster configuration sync and the web front end. It needs to be enabled on all the servers.

systemctl enable pcsd; systemctl start pcsd

Set the ha cluster user password

After the software is installed, a user named hacluster will be created. This user is used by pcsd for all cluster communication.

NOTE: You should use the same password across all cluster nodes for this user. If you echo your password as shown below, clear your history afterward :) .

echo password | passwd hacluster --stdin

Configuring DNS

You should be able to resolve all the nodes in the cluster by name. In this example, we are going to use the hosts file to define our 3 nodes. This is what I added to the hosts file (/etc/hosts) on each node.

192.168.1.10 node1 node1.example.local

192.168.1.20 node2 node2.example.local

192.168.1.30 node3 node3.example.local
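Before moving on, it can be worth confirming that every node name really has an entry. The helper below is a hypothetical sketch (the name `missing_hosts` is made up here) that prints each name with no match in a given hosts file. It runs against a temporary file that deliberately leaves out node3, so it is safe to try anywhere.

```shell
#!/bin/sh
# Report cluster node names that have no entry in a hosts file.
# Usage: missing_hosts HOSTS_FILE NAME...
missing_hosts() {
    hosts_file=$1
    shift
    for name in "$@"; do
        # Skip comment lines, then look for the name in any field after the address.
        if ! awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$hosts_file"; then
            echo "$name"
        fi
    done
}

# Example run against a throwaway file that is missing the node3 entry.
tmp=$(mktemp)
cat > "$tmp" <<'EOF'
192.168.1.10 node1 node1.example.local
192.168.1.20 node2 node2.example.local
EOF
missing_hosts "$tmp" node1.example.local node2.example.local node3.example.local
rm -f "$tmp"
```

On a real node you would pass /etc/hosts as the first argument. Running `getent hosts node1.example.local` is another quick way to check resolution through the system resolver.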

Preparing the systems

Authenticate pcsd.

pcsd requires that the cluster nodes authenticate with each other. We are going to use the hacluster user and password for this. This action only needs to happen on one of the nodes.

[root@node1] pcs cluster auth node1.example.local node2.example.local node3.example.local

Creating the cluster

Let's create the cluster:

pcs cluster setup --name demo-cluster --start node1.example.local node2.example.local node3.example.local

An important step is to enable the cluster services on all nodes. By default, a rebooted node will not rejoin the cluster until its cluster services are started manually. To avoid this, run:

[root@node1] pcs cluster enable --all

Check the cluster status:

[root@node1] pcs cluster status

Configuring Fencing

This is a critical step: you must have fencing on the cluster! In this example, I am assuming that we are using KVM for the demo, so we will use fence_xvm.

[root@node1] pcs stonith create fence_node1_vm fence_xvm port="node1" pcmk_host_list="node1.example.local"

[root@node1] pcs stonith create fence_node2_vm fence_xvm port="node2" pcmk_host_list="node2.example.local"

[root@node1] pcs stonith create fence_node3_vm fence_xvm port="node3" pcmk_host_list="node3.example.local"
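The three commands differ only in the node number, so they can also be generated in a loop. This is a sketch with a dry-run wrapper: the `run` helper and the `DRY_RUN` variable are conventions invented for this example, and by default the script only prints what it would execute.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) prints each command; DRY_RUN=0 runs it.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# One fence_xvm device per node, using the same names and values as the
# individual commands above.
create_fence_devices() {
    for i in 1 2 3; do
        run pcs stonith create "fence_node${i}_vm" fence_xvm \
            port="node${i}" pcmk_host_list="node${i}.example.local"
    done
}

create_fence_devices
```

Run it once as-is to check the output, then with `DRY_RUN=0` on one cluster node to create the devices.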

Open the port for the fencing agent:

[root@node1] for i in `seq 1 3`; do ssh root@node$i.example.local firewall-cmd --add-port=1229/tcp --permanent; done

[root@node1] for i in `seq 1 3`; do ssh root@node$i.example.local firewall-cmd --reload; done

Check fencing status.

[root@node1] pcs stonith show

Resources

Clustered services consist of one or more resources. A resource can be:

- IP address

- file system

- Service (example: httpd)

Resources are usually members of a resource group.

Creating the resources for our demo-cluster

First, let's create the resource group for our Apache cluster. We are going to name it personal-web, and it will have a floating IP.

[root@node1] pcs resource create floatingip IPaddr2 ip=192.168.1.254 cidr_netmask=24 --group personal-web

Install Apache (httpd). Repeat this on every node, since the resource can run on any of them.

[root@node1] yum install httpd -y

Create the web-1 resource using Apache and put it on the personal-web group.

[root@node1] pcs resource create web-1 apache --group personal-web

Let's check that the created resources are present in the cluster configuration and check the cluster status.

[root@node1] pcs resource show

[root@node1] pcs status

On each node, create a file in /var/www/html/ with this content:

echo "Website responding from $HOSTNAME" > /var/www/html/index.html
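Because each node writes its own hostname into the page, the response tells you which node currently holds the resource group. The small helper below (a made-up name, shown as a sketch) pulls the node name out of the page text. The live check is left as a comment because it assumes curl is installed and that the floating IP from this demo is reachable.

```shell
#!/bin/sh
# Extract the node name from the demo index page read on stdin.
responding_node() {
    sed -n 's/^Website responding from //p'
}

# Offline example using the page format written by the echo command above.
echo "Website responding from node1.example.local" | responding_node

# Against the live cluster (assumes curl and the demo floating IP):
#   curl -s http://192.168.1.254/ | responding_node
```

Running the live check before and after putting a node in standby is a simple way to watch a failover happen.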

Manipulating the cluster

Here are some commands that will help you to manage the cluster.

Start and Stop the cluster

To do it on all nodes, use the --all switch.

[root@node1] pcs cluster start

[root@node1] pcs cluster stop

Stop the cluster service on a specific remote node

[root@node1] pcs cluster stop node2.example.local

Disable cluster on reboot on a node

[root@node1] pcs cluster disable

How to add a node to the cluster

[root@node1] pcs cluster node add new.example.com

On the new node, you need to authenticate with the rest of the cluster. You will also need to add a fence device for it.

[root@new] pcs cluster auth

How to remove a node from the cluster

[root@node1] pcs cluster node remove new.example.com

[root@node1] pcs stonith remove fence_newnode new.example.com

Set a node in standby

(This prevents the node from hosting resources.)

[root@node1] pcs cluster standby new.example.com

Set the cluster in standby

[root@node1] pcs cluster standby --all

Unset standby

[root@node1] pcs cluster unstandby --all
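Standby is what makes patching without an outage practical: drain one node, patch it, bring it back, and move on to the next. The loop below sketches that sequence, assuming root SSH access between nodes as used earlier in this demo. The `run` and `patch_node` helpers and the `DRY_RUN` variable are invented for this example, and by default the script only prints the commands.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) prints each command; DRY_RUN=0 runs it.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Drain a node, update its packages, then let it host resources again.
patch_node() {
    node=$1
    run pcs cluster standby "$node"
    run ssh "root@$node" yum -y update
    run pcs cluster unstandby "$node"
}

# One node at a time, so the other two keep the service running.
for node in node1.example.local node2.example.local node3.example.local; do
    patch_node "$node"
done
```

In a real run you would probably check `pcs status` between nodes and make sure resources have settled before continuing.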

Displaying quorum status

[root@node1] corosync-quorumtool

Documentation

Slides: https://github.com/juliovp01/txlf-2015-HA-slides

High Availability Add-On overview: https://access.redhat.com/documentation/en-US/Red_Hat_Enterprise_Linux/7/html/High_Availability_Add-On_Overview/

Cluster Labs website: http://clusterlabs.org/